ABOUT NEURAL SYNTHESIS AND MACHINE LEARNING

As outlined in the critical framework for the residency, my decision to focus on neural synthesis rather than other forms of machine learning, such as MIDI pattern generation, is due to my interest in generating new raw audio material for sampling and production. I am interested in the sonic materiality that these neural models can produce from audio datasets, and in what impact this has on sampling culture, abstraction, authenticity, ownership and materiality.

Is neural synthesis a new technological medium for musique concrète?

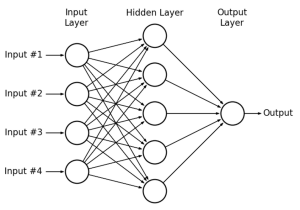

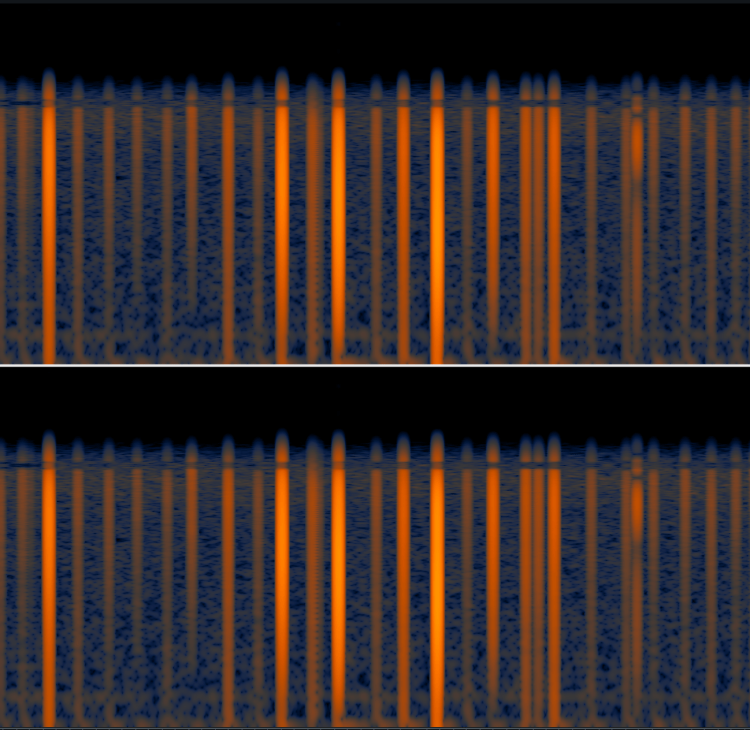

Machine learning is a branch of AI. Deep learning is the part of machine learning that uses multi-layered neural networks. There are different types of machine learning: some models classify or label new information (typically supervised learning, trained on labelled examples) and some generate new outputs (generative models, often trained on unlabelled data, i.e. unsupervised learning). I am focusing on a type of machine learning called Neural Synthesis, specifically a multi-layered neural network model called SampleRNN. RNN stands for Recurrent Neural Network: a network that feeds its own previous state back in as it moves through a sequence, so it can learn patterns that unfold over time. During training the model analyses the dataset and learns these patterns. For sonic AI, our data is a vast set of audio information, which is analysed statistically in order to generate new audio as a prediction based on the inputs. Generally, the more data the model trains on, or the longer it trains on that data, the better the prediction will be (i.e. a higher level of ‘accuracy’).

The concept of prediction ‘accuracy’ is interesting aesthetically, as I am not interested in a machine generating a replica of an audio input (i.e. 10 hours of trumpet = 1 min of machine trumpet). I am interested in the nuance, the error, the ‘bits in between’: making eclectic datasets of disparate material sounds to eke out new materialities, architectures, forms and improvisations to create with.
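To make the idea of "training on audio, then predicting new audio" a little more concrete, here is a minimal toy sketch in Python. It is not SampleRNN and not how PRiSM SampleRNN is implemented: a simple table of counts stands in for the neural network, and the "dataset" is a made-up noisy sine tone. The function names (`quantise`, `train`, `generate`) and the settings (`LEVELS`, `order`) are my own assumptions for illustration. What it does share with neural synthesis is the basic loop: learn from the data which sample value tends to follow the previous ones, then generate new audio one sample at a time.

```python
import math
import random
from collections import defaultdict

LEVELS = 16  # assumed number of quantisation levels for this toy example


def quantise(signal, levels=LEVELS):
    """Map floats in [-1, 1] to integer levels 0..levels-1."""
    return [max(0, min(levels - 1, int((x + 1.0) / 2.0 * levels))) for x in signal]


def train(samples, order=2):
    """Count which level follows each context of `order` previous levels."""
    counts = defaultdict(lambda: defaultdict(int))
    for i in range(order, len(samples)):
        context = tuple(samples[i - order:i])
        counts[context][samples[i]] += 1
    return counts


def generate(counts, seed, length, rng):
    """Generate one sample at a time, drawn from the learned statistics."""
    out = list(seed)
    order = len(seed)
    for _ in range(length):
        options = counts.get(tuple(out[-order:]))
        if not options:
            # Context never seen in training: borrow a random known context.
            options = counts[rng.choice(sorted(counts))]
        levels, weights = zip(*sorted(options.items()))
        out.append(rng.choices(levels, weights=weights, k=1)[0])
    return out[order:]


# Hypothetical "dataset": a sine tone with a little noise added.
rng = random.Random(0)
dataset = quantise([
    0.8 * math.sin(2 * math.pi * 5 * t / 400) + rng.uniform(-0.05, 0.05)
    for t in range(4000)
])

model = train(dataset, order=2)
new_audio = generate(model, seed=dataset[:2], length=200, rng=rng)
print(new_audio[:20])
```

Because each new sample is drawn at random from what the model has seen, the output follows the character of the dataset without copying it exactly. That gap between the data and the prediction is where the ‘bits in between’ live.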

My main tool to start with has been PRiSM SampleRNN. However, I am also going to explore other tools, connecting with AI developers and researchers to test and create audio with other models including WaveNet and RAVE, as well as NLP text generation tools.

AIMS | WHICH TOOLS? | ACCESSIBILITY

I am extremely privileged to be working with NOVARS, University of Manchester, as artist in residence to explore these ideas; their support and expertise have been invaluable. Having an academic partner allows access to expertise, facilities and a research base that isn’t usually available to DIY noise artists like me! Working in collaboration with PRiSM, RNCM and being part of the UNSUPERVISED research group has opened up possibilities to collaborate with academics, researchers and technologists, which has been vital for the project. I am acutely aware that I want to be able to share this knowledge and process with others outside academia. One aim for the residency is for me to develop skills and understanding about practical sonic machine learning in order to share learnings with others, in an attempt to ‘demystify’ sonic AI and remove barriers to working with it. As a self-confessed ‘not-that-great’ coder, I am keen to explore accessible, open access workflows and interfaces for non-specialists and beginners. Throughout the research I am collating and testing platforms for non-coders and for those without specialist knowledge or access to technologists or academic partners. This is working towards a creative AI programme for young women that I will be delivering with Brighter Sound in 2022, getting hands-on making music with machine learning.

This page will become populated with the tools and techniques I’ve used throughout the residency, with a focus on inclusive/accessible tools.

PRiSM SAMPLERNN

PRiSM is the Centre for Practice & Research in Science & Music at the Royal Northern College of Music. Throughout my residency I have been working with this neural model alongside Sam Salem, PRiSM Lecturer in Composition, and Dr Christopher Melen, PRiSM Research Software Engineer (who developed PRiSM SampleRNN). I trained my concrete dataset on the PRiSM super-machines, and used the open source Google Colab notebook for home ML experiments. Chris has written a great blog about the history of neural synthesis, and you can access the PRiSM SampleRNN Google Colab here.

UNSUPERVISED

I am part of UNSUPERVISED, a brand new Manchester-based Machine Learning for Music Working Group. UNSUPERVISED is a partnership between two institutions: NOVARS at the University of Manchester and PRiSM at the Royal Northern College of Music. The group consists of masters and PhD level students, researchers and guest artists. It has been wonderful to be part of the inaugural group, meeting up monthly to chat about our developments, share research and talk about concepts and ideas within this field. Our first concert event was part of the online Future Music 3 in 2021, and we are now planning (hopefully) for a live public sharing of new works in 2022.

CREATIVE PROGRAMME FOR YOUNG WOMEN

Coming April 2022

I’m excited to be working again with long-term collaborators Brighter Sound on a creative AI music education programme for young women. The project will introduce machine learning from both a technical and a conceptual standpoint, and will focus on current critical debates around data ethics and accessibility. We will be making new AI artworks with a variety of accessible tools for audio and visual generation. The project will be open to music makers who are beginners in working with artificial intelligence.